This post was originally written on Codeforces; relevant discussion can be found here.

Introduction

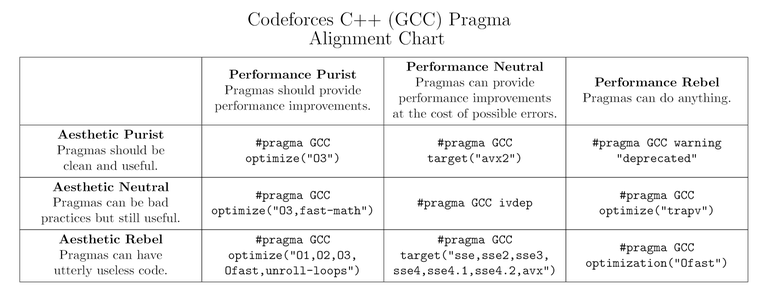

A while ago ToxicPie9 and I made and posted this meme:

To my surprise, many people are quite confused about it. In fact, I realized there are a lot of misconceptions about pragmas. Most C++ users on Codeforces put a few lines of pragmas at the start of every submission. However, I believe many of them don’t fully understand what they’re doing or how pragmas work; the only thing people seem to think is that “add pragmas -> code go brr”.

Pragmas are a form of the dark arts that are feared and used, both correctly and incorrectly, by lots of competitive programmers. They are widely believed to make your code much faster, but sometimes may lead to slowdowns and even runtime errors on certain platforms. In this post, I will explain the effects of #pragma GCC optimize and #pragma GCC target, how they work, and how you should and shouldn’t use them.

Feel free to skip to the end of the post for a TL;DR.

Warning

All of this discussion is for GCC, and the code you will write might not be portable across different compilers or platforms (in terms of turning on the same optimizations). However, you may assume that most of them work on Codeforces and other x86 platforms that are not too old.

Some Non-examples

None of the following lines does anything.

#pragma GCC optimize(" unroll-loops")

#pragma gcc optimize("Ofast")

#pragma GCC optimization("Ofast")

#pragma optimize(Ofast)

Yes, these are real-life examples we have seen being used by many competitive programmers, including quite a few LGMs. Perhaps the third one among these came from this post which seems to have popularized the idea of using pragmas, but with a small mistake. Many people are misled to believe that some of the above work, when they actually don’t – stop using them.

Also, putting any optimization pragmas in the middle of the submission might end up not optimizing certain parts of your solution, so any pragmas must be added at the beginning of the submission.


If ever in doubt about whether your pragmas are correct, turn on most compiler warnings with the command-line option -Wall (or the more specific -Wunknown-pragmas). For example, if you compile the code above with -Wall, you’ll get the following output, which tells you that the pragmas are invalid and useless:

foo.cpp:2: warning: ignoring ‘#pragma gcc optimize’ [-Wunknown-pragmas]

2 | #pragma gcc optimize("Ofast")

|

foo.cpp:3: warning: ignoring ‘#pragma GCC optimization’ [-Wunknown-pragmas]

3 | #pragma GCC optimization("Ofast")

|

foo.cpp:4: warning: ignoring ‘#pragma optimize ’ [-Wunknown-pragmas]

4 | #pragma optimize(Ofast)

|

foo.cpp:1:37: warning: bad option ‘-f unroll-loops’ to pragma ‘optimize’ [-Wpragmas]

1 | #pragma GCC optimize(" unroll-loops")

| ^

Try to check if your submissions have similar problems. If yes, then you have probably been using pragmas wrong the entire time – it’s time to change your default code.

What Is “#pragma GCC optimize”?

It turns on certain optimization flags for GCC. The syntax is

#pragma GCC optimize (option, ...)

According to the official source on GCC pragmas, this pragma allows you to set global optimization flags (specified by option) for functions that come after it. Let’s have a look at a few of them:

- O0, O1: These are pretty useless for competitive programming purposes, so we won’t discuss these here.

- O2: This is the default optimization option on Codeforces, so using this might not give any tangible benefit.

- O3: This is the first non-trivial optimization option. It can make your code slower sometimes (due to the large size of generated code), but this is not very frequent in competitive programming. Some of the things it does are:

  - Auto-vectorize the code if the mentioned architectures allow it. This can make your code much faster by using SIMD (single instruction, multiple data), which kinda parallelizes your code on an instruction level. More info below.

  - Function inlining: inlines functions aggressively if possible (and no, marking functions as inline doesn’t inline functions, nor does it give hints to the compiler).

  - Unrolls loops more aggressively than O2 (this might lead to instruction cache misses if generated code size is too large).

- Ofast: This is one of the more controversial flags. It turns on all optimizations that O3 offers, along with some other optimizations, some of which might not be standards compliant. For instance, it turns on the fast-math optimization, which assumes floating-point arithmetic is associative (among other things), and under this assumption, it is not unexpected to see your floating-point error analysis go to waste. Ofast may or may not make your code faster; only use this if you’re sure it does the right things.
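To see why the associativity assumption matters, here is a minimal sketch that adds the same three doubles with two different groupings. It uses no pragma at all; it only illustrates the kind of difference that fast-math style reordering is allowed to introduce. The function names are made up for this illustration, and the volatile temporaries are only there to stop the compiler from folding the additions.

```cpp
#include <cassert>

// Computes (a + b) + c. The volatile temporary keeps the compiler from
// folding or regrouping the two additions in this demo.
double sum_left(double a, double b, double c) {
    volatile double t = a + b;
    return t + c;
}

// Computes a + (b + c) on the same inputs.
double sum_right(double a, double b, double c) {
    volatile double t = b + c;
    return a + t;
}

// Hypothetical self-check: the two groupings disagree for these inputs,
// because 1.0 is lost when it is added to -1e16 first.
void check_not_associative() {
    assert(sum_left(1e16, -1e16, 1.0) != sum_right(1e16, -1e16, 1.0));
}
```

With IEEE-754 doubles, sum_left(1e16, -1e16, 1.0) gives 1.0 while sum_right(1e16, -1e16, 1.0) gives 0.0, so a compiler that is free to regroup additions can change the answer.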

You can also use some other options, like:

- unroll-loops: Enables aggressive loop unrolling, which reduces the number of branches and optimizes parallel computation, but might increase code size too much and lead to instruction cache misses.

- unroll-all-loops: Usually makes the program slower.

- strict-overflow: Enables some optimizations that take advantage of the fact that signed integer overflow is undefined behavior.

- trapv: This one is quite special, as it is not usually considered an “optimization”: enabling this will make your code run much slower, but causes signed integer overflows to generate runtime errors. Useful for debugging. (Editor’s note: if your system supports it, it’s recommended to use a sanitizer instead. You can find tutorials on the internet, so we won’t discuss it here.)

A full list of supported flags in GCC can be found here.

An example: let’s say you want to use O3 and unroll-loops. Then you could do something like

#pragma GCC optimize("O3")

#pragma GCC optimize("unroll-loops")

or

#pragma GCC optimize("O3,unroll-loops")

or

#pragma GCC optimize("O3","unroll-loops")

Note that the options are strings inside quotation marks, and there’s no space after or before the comma in the second way of writing. However, you can have a space before or after the comma in the last way of writing without any issues.
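As a rough sketch of how this fits into a submission, the pragma goes on the first line, before any includes, so that it applies to everything that follows. The function hamming_distance is a made-up example of a simple loop and is not from the original post; whether the pragma actually speeds it up is something you would have to measure.

```cpp
#pragma GCC optimize("O3,unroll-loops")  // one string, no spaces around the comma

#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical hot loop: counts the positions where two equally long
// arrays differ. Simple loops like this are typical candidates for
// unrolling and vectorization.
int hamming_distance(const std::vector<int>& a, const std::vector<int>& b) {
    assert(a.size() == b.size());
    int diff = 0;
    for (std::size_t i = 0; i < a.size(); i++)
        diff += (a[i] != b[i]);
    return diff;
}
```

The result is the same with or without the pragma; only the generated machine code is supposed to differ.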

What Is “#pragma GCC target”?

Many modern computers and almost all competitive programming judges run on the x86 architecture. It has received a lot of additions over the years – particularly, extra features (instructions) that enable different calculations or make existing ones much faster.

Compilers allow you to take advantage of these instruction set extensions. For example, on CPUs that support the popcnt instruction, __builtin_popcount can theoretically be compiled into one instruction, which is much faster than usual implementations of this function. Similarly, if you want to auto-vectorize code, you’d need some instruction sets that actually support the vector instructions. Some of these instruction sets are sse4.2, avx, avx2. Here’s a list of instruction sets that are usually supported on Codeforces:

- avx and avx2: These are instruction sets that provide 8, 16 and 32 byte vector instructions (i.e., you can do some kinds of operations on pairs of 8 aligned integers at the same time). Prefer using avx2 since it’s newer.

- sse, sse2, sse3, sse4, sse4.1, sse4.2: These are instruction sets that are also for vectorization, but they’re older and not as good as avx and avx2. These are useful for competitions on websites such as Yandex, where avx2 is not supported and gives a runtime error due to an unrecognized instruction (it corresponds to a SIGILL signal, i.e., illegal instruction).

- popcnt, lzcnt: These optimize the popcount (__builtin_popcount family) and count leading zeros (__builtin_clz family) operations respectively.

- abm, bmi, bmi2: These are bit manipulation instruction sets (note that bmi is not a subset of bmi2). They provide even more bitwise operations like ctz, blsi, and pdep.

- fma: This is not so widely used, since avx and sse make up for most of it already.

- mmx: This is even older than the sse* family of instruction sets, hence is generally useless.

You should be quite familiar with them if you have written SIMD code before. If you’re interested in a certain instruction set, there are plenty of resources online.

An example: if you want to use, say, avx2, bmi, bmi2, popcnt and lzcnt, you can write

#pragma GCC target("avx2,bmi,bmi2,popcnt,lzcnt")

Again, note that we don’t have spaces in the string.

There are two main ways to use #pragma GCC target.

- You can use it with the optimization pragmas. It allows the compiler to automatically generate efficient SIMD instructions from parts of your code (based on the optimization flags), often boosting their performance by roughly 2, 4 or even 8 times.

- It enables you to use the intrinsics of supported instruction sets. For example,

#include <cstdint>

#include <immintrin.h>

// returns the odd bits of x: 0b01010101 -> 0b1111

uint32_t odd_bits(uint32_t x) {

return _pext_u32(x, 0x55555555u);

}

This code works very efficiently, but won’t compile unless you specify a valid target. Normally this is done by adding the -mbmi2 compilation flag, but this is impossible on Codeforces, so you can only enable it in other ways, such as #pragma GCC target("bmi2").

- An extreme example of this is this super-fast convolution code that makes heavy use of SIMD intrinsics to squeeze the running time of an \(\mathcal{O}(N \log N)\) algorithm with a traditionally bad constant factor with \(N \approx 5 \times 10^5\) to only 49ms.
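Here is a slightly fuller sketch of the _pext_u32 example above, assuming GCC on x86-64. The names odd_bits_bmi2, odd_bits_portable, self_check and HAVE_PEXT_PATH are hypothetical. Instead of enabling bmi2 for the whole file, the target is scoped to a single function with push_options/pop_options (described in the Bonus section), so that the fallback is not compiled with BMI2 instructions and the runtime check stays meaningful on CPUs without BMI2.

```cpp
#include <cassert>
#include <cstdint>

#if defined(__x86_64__) && defined(__GNUC__) && !defined(__clang__)
#include <immintrin.h>
#define HAVE_PEXT_PATH 1

#pragma GCC push_options
#pragma GCC target("bmi2")  // without this (or -mbmi2), _pext_u32 does not compile
// Gathers bits 0, 2, 4, ... of x with a single BMI2 instruction.
static uint32_t odd_bits_bmi2(uint32_t x) {
    return _pext_u32(x, 0x55555555u);
}
#pragma GCC pop_options
#else
#define HAVE_PEXT_PATH 0
#endif

// Portable fallback: gathers bits 0, 2, 4, ... of x into the low 16 bits.
static uint32_t odd_bits_portable(uint32_t x) {
    uint32_t r = 0;
    for (int i = 0; i < 16; i++)
        r |= ((x >> (2 * i)) & 1u) << i;
    return r;
}

// Uses the BMI2 version only when the CPU running the code reports support.
uint32_t odd_bits(uint32_t x) {
#if HAVE_PEXT_PATH
    if (__builtin_cpu_supports("bmi2")) return odd_bits_bmi2(x);
#endif
    return odd_bits_portable(x);
}

// Sanity check for this sketch: both paths agree on a few inputs.
void self_check() {
    const uint32_t samples[] = {0u, 0x55u, 0xAAu, 0x12345678u, 0xFFFFFFFFu};
    for (uint32_t x : samples)
        assert(odd_bits(x) == odd_bits_portable(x));
}
```

Calling odd_bits(0x55u) yields 0xF on either path, matching the comment in the original snippet.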

Note that using vectorization targets won’t work unless you have O3 or Ofast on (more specifically, tree-vectorize, with at least O2; note that O2 is turned on by default on Codeforces).

Function Attributes

Just in case you don’t want to apply these optimizations globally, you can use function attributes. For instance,

__attribute__((target("avx2"), optimize("O3", "unroll-loops"))) void work() {

// do something

}

This enables these attributes for this function only, and the rest of the code will be compiled with the global options.
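One way to put function attributes to work is to keep a baseline version of a loop next to an AVX2 version and pick between them at run time. The sketch below assumes GCC (or a compatible compiler) on x86-64; sum_plain, sum_avx2 and sum are hypothetical names, and whether the AVX2 build is actually faster should be measured rather than assumed.

```cpp
#include <cassert>

// Baseline version, compiled with whatever options apply to the rest of the file.
static long long sum_plain(const int* a, int n) {
    long long s = 0;
    for (int i = 0; i < n; i++) s += a[i];
    return s;
}

#if defined(__x86_64__) && defined(__GNUC__)
// Same loop, but only this function may be compiled to AVX2 instructions.
__attribute__((target("avx2"), optimize("O3", "unroll-loops")))
static long long sum_avx2(const int* a, int n) {
    long long s = 0;
    for (int i = 0; i < n; i++) s += a[i];
    return s;
}
#endif

// Picks the AVX2 build only when the CPU running the code reports AVX2 support.
long long sum(const int* a, int n) {
    assert(n >= 0);
#if defined(__x86_64__) && defined(__GNUC__)
    if (__builtin_cpu_supports("avx2")) return sum_avx2(a, n);
#endif
    return sum_plain(a, n);
}
```

Because the dispatcher itself is compiled without the avx2 target, calling sum should not raise SIGILL on a machine that lacks AVX2.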

Bonus

#pragma GCC ivdep: This pragma forces the compiler to vectorize a loop if the loop was not vectorized due to possible aliasing. This means that the compiler wasn’t able to prove that vectorizing is safe due to data dependency, but you can, due to knowledge of the problem at hand, show that this case will never happen. This results in undefined behaviour if you’re not careful enough about there not being a dependency across loop iterations. Think of this having a similar idea behind it as pointer restrict in C99.
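A minimal sketch of the usual shape, with a hypothetical function add_arrays: the compiler cannot know that dst does not overlap a or b, and the pragma, placed directly before the loop, is the programmer's promise that it doesn't.

```cpp
#include <cassert>

// The caller promises that dst does not overlap a or b. If that promise
// is broken, the behaviour of the vectorized loop is undefined.
void add_arrays(int* dst, const int* a, const int* b, int n) {
    assert(n >= 0);
#pragma GCC ivdep
    for (int i = 0; i < n; i++)
        dst[i] = a[i] + b[i];
}
```

Compilers that don't know this pragma simply ignore it (possibly with a warning), so the function still computes the same result.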

#pragma GCC push_options, #pragma GCC pop_options: These maintain a stack of target and optimization pragmas, enabling you to temporarily switch to another set of options. #pragma GCC reset_options resets all the options.
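As a small sketch of the push/pop pattern (the functions dot and total are made up for illustration), only the code between push_options and pop_options is compiled with the extra options:

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

#pragma GCC push_options
#pragma GCC optimize("O3,unroll-loops")
// Only this function is compiled with the extra optimization options.
long long dot(const std::vector<int>& a, const std::vector<int>& b) {
    assert(a.size() == b.size());
    long long s = 0;
    for (std::size_t i = 0; i < a.size(); i++) s += 1LL * a[i] * b[i];
    return s;
}
#pragma GCC pop_options

// From here on, the options that were active before push_options apply again.
long long total(const std::vector<int>& a) {
    long long s = 0;
    for (int x : a) s += x;
    return s;
}
```

This is handy when one hot function benefits from aggressive flags while the rest of the program does not.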

#pragma GCC optimize "Ofast" and #pragma GCC optimize "-Ofast" also surprisingly work. The same holds for stuff like #pragma GCC optimize "-funroll-loops" and #pragma GCC optimize "unroll-loops". However, #pragma GCC target "avx2" works but #pragma GCC target "-mavx2" doesn’t.

Some Caveats

As we have pointed out already, there might be some caveats associated with using the aforementioned pragmas.

- Some of these optimize/target pragmas can potentially make your code slower too, due to code size and other associated stuff.

- You might get runtime errors if you’re careless about your choice of instruction sets. In general, on any platform, you should try to use each instruction set (and also write code that benefits from that instruction set!) and see if you’re getting weird behaviour or not. There are also some ways to check if the machine your code is going to be run on has certain instruction sets at compile time, but inconsistencies with the actual set of instruction sets are possible in subtle ways, so you shouldn’t in general depend on them. A good way, however, is to run custom tests with stuff like assert(__builtin_cpu_supports("avx2")).

- In general, constant-factor optimization at such a low level is somewhat unpredictable, and you should rely on measuring the performance rather than guessing from the instruction counts/latencies. This also means taking the contents of this post with a grain of salt and not blindly believing that using these pragmas will somehow help you cheese every \(\mathcal{O}(n^2)\) solution into the time limit for \(n = 10^5\). A good way to look at generated assembly is by using godbolt.org.
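The runtime check mentioned above can be turned into a small probe for a judge's custom test. This is only a sketch assuming GCC or Clang on x86; CpuFeatures, probe_cpu and print_cpu_features are hypothetical names, and the list of probed features is deliberately short. On other architectures every field simply stays false.

```cpp
#include <cassert>
#include <cstdio>

// Which of a few instruction sets the current machine reports.
struct CpuFeatures {
    bool sse42, avx, avx2, popcnt;
};

CpuFeatures probe_cpu() {
    CpuFeatures f = {false, false, false, false};
#if (defined(__x86_64__) || defined(__i386__)) && defined(__GNUC__)
    // The argument of __builtin_cpu_supports has to be a string literal.
    f.sse42  = __builtin_cpu_supports("sse4.2") != 0;
    f.avx    = __builtin_cpu_supports("avx") != 0;
    f.avx2   = __builtin_cpu_supports("avx2") != 0;
    f.popcnt = __builtin_cpu_supports("popcnt") != 0;
#endif
    // Sanity check for this sketch: real x86 CPUs with AVX2 also have AVX.
    assert(!f.avx2 || f.avx);
    return f;
}

// Prints one line per probed instruction set.
void print_cpu_features() {
    const CpuFeatures f = probe_cpu();
    std::printf("sse4.2: %s\n", f.sse42 ? "yes" : "no");
    std::printf("avx:    %s\n", f.avx ? "yes" : "no");
    std::printf("avx2:   %s\n", f.avx2 ? "yes" : "no");
    std::printf("popcnt: %s\n", f.popcnt ? "yes" : "no");
}
```

Calling print_cpu_features() from main in a custom test shows what the judging machine reports before you commit to a target string.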

Some Examples

There are multiple incidents in Codeforces folklore that point to how fast pragmas can make your code. Some instances are:

- https://codeforces.com/blog/entry/89640

- https://codeforces.com/blog/entry/66279

- https://codeforces.com/blog/entry/81467 (unroll-loops isn’t actually used)

Some more helpful discussion can be seen in the thread for this comment: https://codeforces.com/blog/entry/94609?#comment-836718

Conclusion/TL;DR

If you skipped the entire post to read this part, it’s recommended that you also read the Some Non-examples section and check if you’re using an incorrect pragma.

No pragma is absolutely safe to use. Don’t blindly believe that one line of pragma can suddenly, magically boost the speed of all your submissions. Often, you will need some testing or researching to decide how to improve your constant factor.

Do remember that for the sake of optimizing all parts of your program, all pragmas must be present at the beginning of the submission, even before the includes.

When restricted to competitive programming on Codeforces or a fixed platform, however, some results might be more predictable. Specifically,

- The default O2 already has a lot of optimizations compared to O0. Increasing it to O3 or even Ofast might increase or decrease the performance, depending on your code and input. Most online judges provide a “custom test” function which helps you figure out which one is more appropriate. You can also test other flags if you know what they do.

- Usually, the avx and avx2 targets are supported by newer online judges. When they are supported, these can usually make your code faster. They often work best when your code is easily vectorizable (e.g., tight loops), which requires some experience to write. Again, custom tests are always useful (also, make sure they don’t cause errors due to architecture issues). You can try the sse family if they don’t work.

- Other targets like popcnt and bmi don’t usually matter a lot when the compiler is optimizing; however, operations like __lg, popcount, etc. require them to be fast. This is important when you use bitsets or do heavy bit manipulations.

Some pragmas I personally use on Codeforces are:

#pragma GCC optimize("O3,unroll-loops")

#pragma GCC target("avx2,bmi,bmi2,lzcnt,popcnt")

If on a platform with no avx2 support, I switch out the avx2 with either avx or one of the sse’s. Some (ancient) judges might not have support for the other targets either, so it’s usually a decision you need to make as mentioned in the post.

Acknowledgments

Thanks to ToxicPie9 for helping me write this post and suggesting both stylistic changes as well as things to include! Please give him upvotes.

Changelog

Apologies for necroposting, but we saw a lot of people still using pragmas incorrectly, and found that there were some inadvertent omissions in the previous version of the text above that do not handle those cases. Here is the list of changes:

- Added the fact that all pragmas must be present at the beginning of the submission.